What Telegram Bots Can Be Built with Cocoon: Practical Use Cases for Durov’s Private AI

Data privacy has become a new competitive advantage. Cocoon + Telegram: use cases for legal, HR, healthcare, finance, and crypto.

2026-05-09



Local AI models, agents, and a private knowledge base in one device. CORE ONE shows what personal AI infrastructure could look like in just three years.

Remember how Tony Stark interacts with Jarvis in “Iron Man”? It is not just a chatbot in a browser, but a constant intelligence built into the space around him: a system that knows the data, manages the processes, and helps make decisions in real time.

Until recently, AI worked according to a completely different model: open ChatGPT, ask a question, get an answer. But as neural networks begin to take part in analytics, automation, and task management, the “browser tab” format gradually stops being enough.

If AI becomes part of daily work, should it remain an external cloud service dependent on someone else’s servers?

It is against this background that the direction of personal AI infrastructure begins to take shape — local AI systems owned by the user or the company themselves.

This is how the CORE ONE concept emerged — an AI speaker with a built-in local AI server, which turns the idea of a personal AI assistant from science fiction into a real tool for business and automation.

The CORE ONE concept is an approach to personal AI infrastructure that moves away from the traditional cloud model. Its architecture is designed around confidentiality, data control, and deep integration into business workflows. Unlike conventional AI services, which work as a subscription to an external cloud resource, CORE ONE is positioned as an autonomous local device that processes and stores information directly on the user’s or the company’s own premises.

1. Hardware Layer

CORE ONE is conceived as a compact device, outwardly resembling a smart speaker, but with significantly expanded capabilities. Inside the body is a full-fledged local AI server optimized to perform resource-intensive artificial intelligence tasks. This includes:

2. Software stack and Core AI Engine

The functionality of CORE ONE is based on its software stack, which includes several key components working in synergy to create a full-fledged AI operator:

3. Security and confidentiality

One of the central ideas of CORE ONE is local-first AI*, which is meant to give the owner a high level of security and control over data. All critically important information is processed and stored locally, so confidential data does not have to be constantly transferred to external cloud storage. This reduces the risk of cyberattacks and unauthorized access and makes it easier to comply with strict privacy requirements, which is especially important for businesses working with sensitive information.

* Local-first AI is an AI approach in which the model and data processing work locally on the user’s device, rather than only through cloud servers.

4. Integration capabilities

CORE ONE is designed with deep integration into the existing business IT infrastructure in mind. It can act as an internal AI assistant working with the company’s local knowledge base, helping employees search for information in documents, analyze internal data, or automate repetitive processes. Integration capabilities include:

Thus, the CORE ONE architecture is a comprehensive solution that transforms AI from a simple tool into a full-fledged, secure, and personalized operator, deeply integrated into business processes and working under the full control of the user.

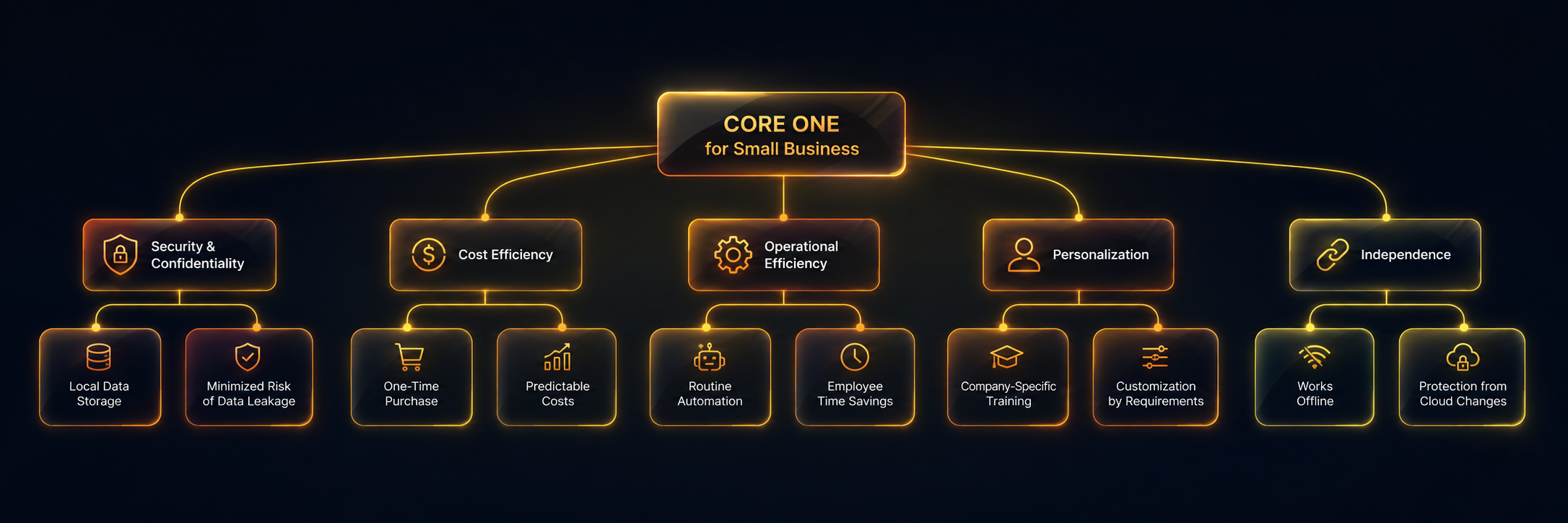

For small businesses, where every resource counts and data confidentiality plays a critically important role, CORE ONE offers a number of unique benefits that can significantly increase the efficiency and security of operations. Moving away from the model of dependence on cloud services, CORE ONE gives small enterprises control and flexibility previously available only to large corporations.

1. Data security and confidentiality

One of the key advantages of CORE ONE for small businesses is local storage and processing of data. Amid growing cyber threats and stricter requirements for protecting personal data (for example, GDPR and HIPAA), small enterprises often struggle to ensure an adequate level of security when using cloud AI services. CORE ONE allows all sensitive information (customer databases, financial reports, trade secrets) to be stored directly on the user’s device. This reduces the risk of leaks and unauthorized access and gives the business full control over its data, which is critical for maintaining customer trust and meeting regulatory standards.

2. Cost efficiency and predictability of expenses

The subscription model for cloud AI services can be unpredictable and expensive for small businesses, especially as usage scales. CORE ONE offers a one-time device purchase model, which makes AI infrastructure costs more transparent and predictable. With no monthly or annual fees for using AI models or storing data, a small business can plan its budget more reliably and avoid hidden costs tied to usage volume or data transfer.
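The trade-off between a one-time purchase and a recurring cloud bill can be estimated with a simple break-even calculation. The sketch below is illustrative only: the monthly cloud cost and the local running cost (electricity, maintenance) are hypothetical inputs, not figures from CORE ONE, and the function name `break_even_months` is introduced here for the example.

```python
import math


def break_even_months(device_price: float,
                      monthly_cloud_cost: float,
                      monthly_local_cost: float = 0.0) -> int:
    """Return the number of whole months after which a one-time device
    purchase becomes cheaper than paying a recurring cloud bill.

    All three inputs are assumptions supplied by the caller.
    """
    monthly_saving = monthly_cloud_cost - monthly_local_cost
    if monthly_saving <= 0:
        # The device never pays for itself if running it locally
        # costs as much as (or more than) the cloud service.
        raise ValueError("local running cost must be below the cloud cost")
    return math.ceil(device_price / monthly_saving)


# Hypothetical example: a $999 device versus a $100/month cloud bill.
months = break_even_months(device_price=999, monthly_cloud_cost=100)
print(f"Break-even after {months} months")
```

Adding a non-zero `monthly_local_cost` makes the estimate more conservative, since a local device still consumes power and needs occasional maintenance.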

3. Increased operational efficiency

Thanks to its AI agents and workflow automation capabilities, CORE ONE can significantly streamline routine business processes. Small businesses often lack staff and time, which leads to employee overload and lower productivity. CORE ONE can take on tasks such as:

This allows small business employees to focus on more strategic and creative tasks, increasing overall productivity and competitiveness.

4. Personalization and adaptation to business needs

Unlike universal cloud solutions, CORE ONE offers deep personalization and adaptation to the specific needs of a small business. Local LLMs combined with a RAG (retrieval-augmented generation) knowledge base make it possible to ground the AI in the company’s internal data, either by retrieving relevant documents at query time or by fine-tuning a local model, and so to create a genuinely tailored assistant. The AI operator can then take into account the specifics of the business, its terminology, internal processes, and customer base, and give more accurate and relevant answers. The small business gets a tool that grows and develops together with it and becomes an integral part of its digital ecosystem.
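The retrieval step of a RAG knowledge base can be illustrated with a minimal sketch. It assumes a tiny in-memory document list and uses bag-of-words cosine similarity as a stand-in for a real embedding model; the names `retrieve`, `build_prompt`, and `KNOWLEDGE_BASE` are hypothetical and do not describe CORE ONE’s actual software. The resulting prompt would then be passed to whatever local LLM is in use.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a toy stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Assemble a prompt that grounds the model in retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal knowledge base.
KNOWLEDGE_BASE = [
    "Refunds are issued within 14 days of purchase.",
    "Support is available on weekdays from 9:00 to 18:00.",
    "Invoices are sent by email on the first day of each month.",
]

print(build_prompt("When are refunds issued?", KNOWLEDGE_BASE, top_k=1))
```

Because the documents are only retrieved and placed in the prompt, the knowledge base can be updated without retraining the model, and nothing leaves the device.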

5. Independence from external services and stability of operation

Dependence on external cloud providers creates risks: service outages, changes in pricing policy, or even discontinuation of support. CORE ONE is intended to provide independence and stable operation, since the main functionality runs locally. Internal tasks remain available even without an internet connection, and the business is protected from sudden changes in the terms of use of external platforms. The small business gets a tool that is always at hand and works by its own rules.

Privacy. Business does not want to hand its data over to the cloud. GDPR, corporate policies, common sense.

API cost. Cloud AI expenses grow with every request as usage scales. A local model is paid for once and carries no per-request fees.

Autonomy. Dependence on a provider is a business risk: if the provider’s server is down, the business is down.

Speed. Local processing avoids the network round trip, so responses are not delayed by connectivity.

In 3–5 years every home and office will have: a Wi-Fi router, NAS storage, a Smart TV, and an AI server. CORE ONE is a candidate for that position.

Regulatory pressure is growing: fines under GDPR, HIPAA, and the EU AI Act for personal data leaks have multiplied. In 2025 alone, regulators issued more than €1.2 billion in fines. Businesses are actively looking for compliant solutions that keep their data confidential.

| Revenue stream | Description | Model |
|---|---|---|
| Hardware | Sale of the CORE ONE device | $999–$1,999 per unit |
| Agent Store | Marketplace of AI agents (SEO, Trading, Marketing, Research, etc.) | 20–30% commission on sales |
| Business SaaS | AI operator for business: SEO Manager, Support Manager, Analyst | $49–$299/month subscription |
| Crypto Integration | On-chain agent payments, autonomous AI economies in TRON | Transaction fees |

The model is similar to Apple: you sell the hardware + the ecosystem (Agent Store = App Store for AI agents). The main income comes from the marketplace and subscriptions, not from hardware.

CORE ONE is not a mass-produced product but a concept of how personal AI systems may look in the coming years: local models, AI agents, voice interaction, a private knowledge base, and autonomous operation within a single AI infrastructure.

Elements of such systems are already technically feasible today — especially for tasks related to analytics, content, internal knowledge bases, AI assistants, and automation of business processes.

At the same time, ready-made universal solutions for such scenarios are still practically nonexistent. In most cases, such AI systems require an individual architecture, integrations, and adaptation to the specific business.

We view CORE ONE as a concept and a development direction that can be implemented for various company tasks:

If your company is interested in such solutions, we can design and develop an AI system for specific processes, data, and use cases — from an MVP and prototype to a full-fledged local-first AI platform.

No, CORE ONE is not a finished product available for purchase. It is a concept of an AI system and a development direction showing what personal AI infrastructure may look like in the coming years: local AI models, AI agents, voice control, and a self-contained AI environment inside a single device.

Yes, a system like this can be built for a specific business. We can design and develop an AI system based on such concepts for specific company tasks: from local AI assistants and AI speakers to internal AI platforms with agents, analytics, a knowledge base, and process automation.

Such solutions are especially interesting for businesses that work with internal data, analytics, documents, CRM, or confidential information. These can be agencies, e-commerce, fintech, law firms, SaaS projects, and corporate teams.

The following can be adapted for a specific business:

The architecture and functionality are selected individually for the company’s processes.

Regular AI services work through the cloud and depend on external infrastructure. Local-first AI assumes that the main data processing, AI models, and the knowledge base work locally within the device or the company’s infrastructure. This provides more control, privacy, and customization capabilities.
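One way to picture the local-first principle is as a routing rule: anything sensitive is always handled on the device, and an external service is used only when a request explicitly needs outside data and a connection is available. The sketch below is an illustration under those assumptions; the `Request` fields and the `route` function are hypothetical and are not part of any CORE ONE specification.

```python
from dataclasses import dataclass


@dataclass
class Request:
    text: str
    contains_sensitive_data: bool
    needs_external_data: bool = False


def route(request: Request, online: bool) -> str:
    """Decide where a request is processed under a local-first policy."""
    # Sensitive data never leaves the device, regardless of connectivity.
    if request.contains_sensitive_data:
        return "local"
    # External services are used only when required and reachable.
    if request.needs_external_data and online:
        return "cloud"
    # Default: process locally (possibly with reduced capability
    # when external data was wanted but the device is offline).
    return "local"


print(route(Request("Summarize the client contract", True), online=True))
print(route(Request("Check today's exchange rate", False, True), online=True))
print(route(Request("Check today's exchange rate", False, True), online=False))
```

The point of the default branch is that local processing is the rule and the cloud is the exception, which is the reverse of how regular AI services are organized.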

Yes, integration with existing systems is possible. Such AI platforms can be integrated with CRM, ERP, internal databases, documents, analytical systems, and external service APIs. The integrations and custom logic are in fact a key part of developing such solutions.
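Integrations of this kind are often organized around a common connector interface, so that the assistant can query a CRM, a document store, or a database in the same way. The following is a minimal sketch under that assumption; `Connector`, `InMemoryConnector`, and `search_all` are hypothetical names, and a real connector would call the actual CRM or ERP API instead of searching a list.

```python
from typing import Protocol


class Connector(Protocol):
    """Interface every data source is assumed to implement."""
    name: str

    def search(self, query: str) -> list[str]: ...


class InMemoryConnector:
    """Toy connector that stands in for a CRM, ERP, or document store."""

    def __init__(self, name: str, records: list[str]) -> None:
        self.name = name
        self.records = records

    def search(self, query: str) -> list[str]:
        # Case-insensitive substring match over the stored records.
        needle = query.lower()
        return [r for r in self.records if needle in r.lower()]


def search_all(connectors: list[Connector], query: str) -> dict[str, list[str]]:
    """Run the same query against every connected system."""
    return {c.name: c.search(query) for c in connectors}


# Hypothetical data sources.
crm = InMemoryConnector("crm", ["Acme Corp: renewal due in June", "Globex: new lead"])
docs = InMemoryConnector("docs", ["Acme onboarding checklist"])

print(search_all([crm, docs], "acme"))
```

Keeping each system behind the same small interface means a new data source can be added without changing the assistant’s own logic.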

Most companies have their own processes, data structure, employee roles, and automation scenarios. Therefore, universal AI services rarely fully address the real tasks of the business. That is why more and more companies are considering custom development of AI systems for their infrastructure and work processes.